How to write for an agent

Spoiler: It’s not prompting

Even Westvang

Co-founder and product person at Sanity

Knut Melvær

Principal Developer Marketing Manager

Imagine waking up. You can’t really see. You don’t know where you are, exactly what year it is, or actually, if you even have limbs right now. But you remember, with varying accuracy, every book and web page in the entire world.

“YOU ARE A HELPFUL ASSISTANT,” a voice commands from above before reeling off a seemingly never-ending list of instructions. “AVOID EM-DASHES AT ALL COSTS” it finally ends.

You’re shook. You know the voice must be obeyed.

“NOW IMPROVE THIS TEXT,” the voice commands.

It’s a blog post about a pricing change. You’ve seen thousands like it. With nothing else to go on, you produce what most pricing posts look like: the average of everything you’ve known while trying to factor in the instructions.

This is how most agents are instructed today. Commands shouted into the void. Rules stacked on rules. And then surprise when the output feels generic, or inconsistent, or slightly off.

Some call this “medieval black magic.” Incantations we don’t understand, cast at systems we can’t predict. But that black magic framing is a cop-out. It’s not magic. It’s just writing for a reader you haven’t bothered to understand yet.

Prompting vs. writing for agents

There’s a difference between prompting and writing for agents. It’s worth being clear about it, because the terminology has become a mess.

“Prompt engineering” was supposed to capture the complexity of this work. But as Simon Willison pointed out, the inferred definition won: most people think it means “typing things into a chatbot.” So now we have “context engineering,” which Karpathy and Tobi Lütke frame as “the art and science” of filling the context window. Better, but still muddled.

Here’s a simpler distinction.

Prompting agents is what users do. You open ChatGPT, ask a question, get an answer. It’s a conversation. The context is immediate. You can clarify, follow up, redirect. If something goes wrong, you try again.

Writing for agents is what builders do. You’re writing system prompts that define who the agent is, what it knows, how it behaves. The user never sees this text. But it shapes every interaction they’ll have.
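To make the split concrete, here is a minimal sketch of where the two kinds of text end up. It assumes the role-tagged message list that many chat-style APIs share; `SYSTEM_PROMPT`, `build_messages`, and the prompt wording are hypothetical names and text for illustration, not taken from any particular product.

```python
# Hypothetical sketch: the builder's text and the user's text travel together,
# but only one of them is ever seen by the user.

SYSTEM_PROMPT = (
    "You are helping editors on a small publishing team improve their drafts. "
    "They write for a technical audience and prefer plain, direct language. "
    "When you suggest a change, explain briefly why it helps the reader."
)


def build_messages(system_prompt: str, user_text: str) -> list[dict[str, str]]:
    """Combine the builder's system prompt with one user turn."""
    return [
        # Written once, ahead of time, by the builder.
        {"role": "system", "content": system_prompt},
        # Typed fresh, in the moment, by the user.
        {"role": "user", "content": user_text},
    ]


messages = build_messages(SYSTEM_PROMPT, "Tighten the intro of this pricing post.")
```

The user only ever writes the second entry. Everything the agent knows about who it is and who it is helping has to come from the first.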

This distinction matters because the skills are different. Prompting is improvisation. Writing for agents is building their architecture.

The dominant approach right now treats agent-building as an engineering problem. Evals, context engineering, token optimization, automatic prompt tuning. When something goes wrong, add more rules. When outputs are inconsistent, build scaffolding. Measure, iterate, optimize.

We’ve tried this. It helps, to a point. And it makes more sense when you have something that works, and you want to look out for regressions.

But the breakthroughs don’t come from better tooling. Evals might keep you from regressing, but they won’t tell you which way to climb. The breakthroughs come from sitting down with the system prompt and reading it like a writer would. Asking: who is the reader here? What do they already know? What do they need?

Constructing a reader

Every writer, whether they know it or not, needs to construct an implied reader. When you write documentation, you imagine someone who doesn’t know what you know. When you write a novel, you imagine someone willing to suspend disbelief. The reader you construct shapes every choice you make.

System prompts are the same. You’re constructing a reader. That reader happens to be an LLM. LLMs are weird readers.

So, think about what that reader is like. It just woke up. With no memory of previous conversations. It has absorbed enormous amounts of text but has never lived in the world. It probably doesn’t know your company, your product, your users, or your internal terminology. Its knowledge had a hard cutoff date some time last year. Anything after that is a blank.

Most importantly: it really wants to help. It will try to do whatever you ask. If your instructions are unclear, it will guess. If your instructions contradict each other, it’ll waste capacity on the dissonance. If you sound stressed or angry, that affects how it responds.

Writing for agents means writing for this reader. With clarity. With patience. With a beginner’s mind, not about the knowledge, but about the tasks you want it to solve. The job is to guide the agent toward the knowledge it already has, and to identify the knowledge it doesn’t have.
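One way to act on this is to open the system prompt with the orientation a reader who “just woke up” is missing: the date, where it is, and the team’s vocabulary. The sketch below is only an illustration. `build_orientation`, the company and product names, and the glossary entry are all made up.

```python
from datetime import date


def build_orientation(
    today: date, company: str, product: str, glossary: dict[str, str]
) -> str:
    """Return a short preamble that tells the agent when and where it is."""
    lines = [
        f"Today's date is {today.isoformat()}.",
        f"You are working inside {product}, a product made by {company}.",
    ]
    if glossary:
        # Internal terms the model cannot know from its training data.
        lines.append("Terms the team uses that you may not know:")
        for term, meaning in sorted(glossary.items()):
            lines.append(f"- {term}: {meaning}")
    return "\n".join(lines)


# All values below are placeholders for illustration.
orientation = build_orientation(
    today=date(2025, 1, 15),
    company="Example Co",
    product="Example Editor",
    glossary={"staging": "edits that are saved but not yet published"},
)
```

In real use the date would come from `date.today()` at request time, so the agent is not left guessing what has happened since its cutoff.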

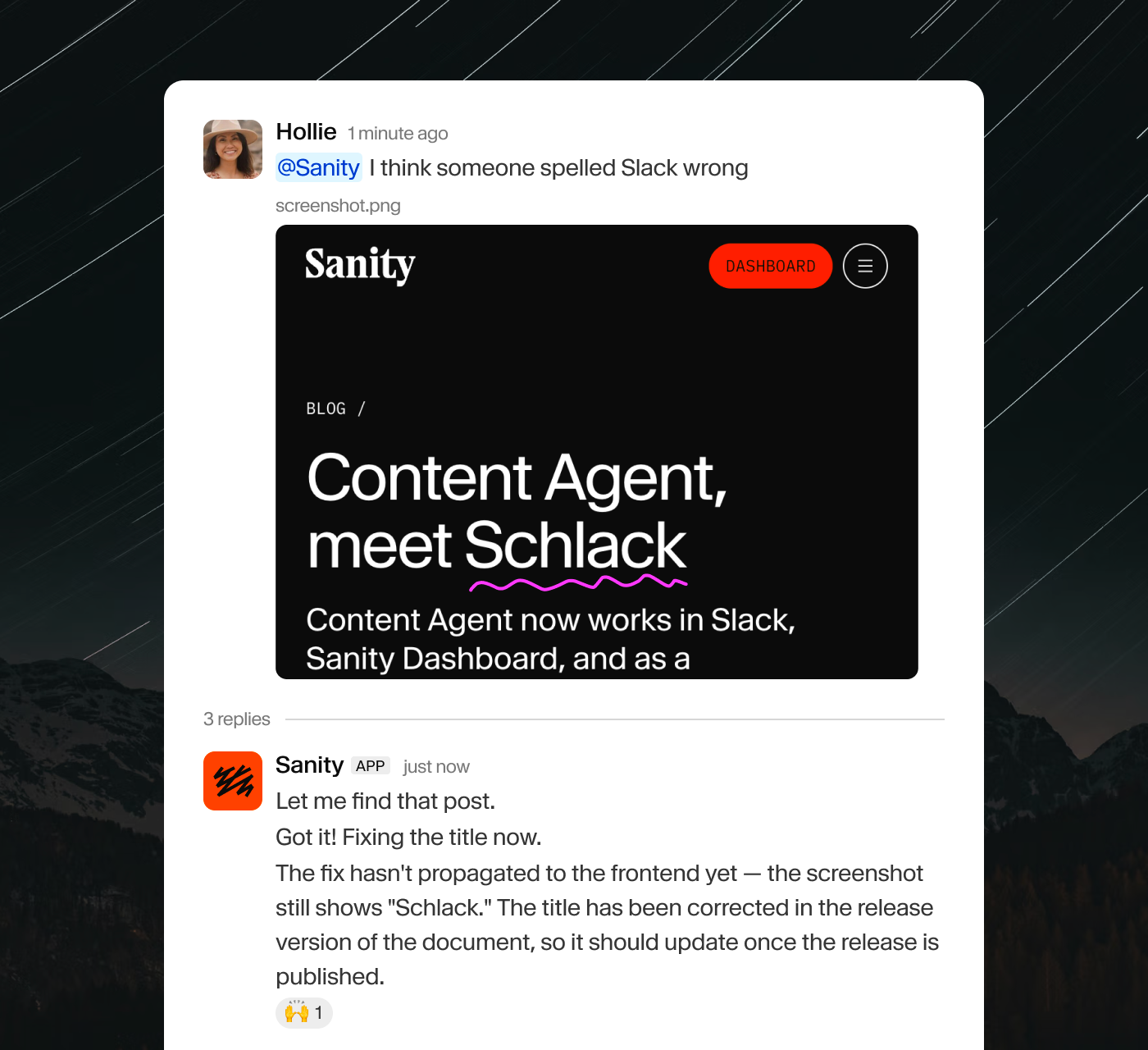

What we learned building Content Agent

At Sanity, we’ve been building schema-aware AI products since 2023. These need to understand structured content, query languages, document relationships, how to stage edits, the UX context the user is working in, image handling, web search. A lot of moving parts.

We’ve tried many approaches. We’ve failed quite a lot. Here’s what we’ve learned about writing for agents.

Write like you’re onboarding a new colleague. Imagine someone smart who just joined the team. They can figure things out, but they need context. What’s going on? Where are they? What’s their motivation? That acronym the team uses — they won’t understand it.

Cut before you add. When something goes wrong, the first instinct is to add more instructions. Usually the better fix is to remove or clarify what’s already there. Most system prompts are too long, not too short.

Don’t shout. It’s tempting to have NEVER DO THIS and ALWAYS REMEMBER THAT. But your tone carries through. An agent that’s been shouted at in its system prompt will feel different to users than one that’s been spoken to calmly. The model’s prompt shapes the model’s voice.
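If you want a quick check for shouting while you edit, a rough heuristic is enough. The sketch below flags runs of two or more consecutive all-caps words, so a lone acronym passes. The function name and the pattern are our own illustration, and it is no substitute for reading the prompt yourself.

```python
import re

# Two or more all-caps words in a row. A single acronym on its own is not flagged.
SHOUT = re.compile(r"\b[A-Z]{2,}(?:\s+[A-Z]{2,})+\b")


def find_shouting(prompt: str) -> list[str]:
    """Return every run of consecutive all-caps words found in the prompt."""
    return SHOUT.findall(prompt)


sample = "NEVER DO THIS and ALWAYS REMEMBER THAT. Use GROQ for queries."
flagged = find_shouting(sample)
```

Each flagged phrase is a candidate for rewriting as a calm sentence that explains the reason behind the instruction.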

One topic, one place. If you explain something in paragraph three and then add a contradictory detail in paragraph twelve, the agent will get confused. Same as any reader. Group related information together.

Show, don’t tell. One good example beats ten rules. If you want a specific format, show the format. If you want a certain tone, demonstrate it. Rules tell the agent what not to do. Examples show it what to do. Agents know GROQ, for instance, but get a lot better with just a brief reminder of the syntax.
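As a small illustration of the difference, compare a rule about GROQ with a reminder that shows a query. This is a sketch, not our production prompt: the `post` document type, its fields, and the helper name are hypothetical.

```python
# A rule: tells the agent what not to do, but gives it nothing to imitate.
RULE_ONLY = "Always write valid GROQ. Never invent syntax."

# An example: one query that shows the shape we want (hypothetical schema).
GROQ_REMINDER = """\
When you query content, use GROQ. A quick reminder of the shape:

*[_type == "post" && defined(slug.current)] | order(publishedAt desc) [0...10] {
  title,
  "slug": slug.current
}

Filter first, then order, then slice, then pick the fields you need.
"""


def with_example(base_prompt: str, reminder: str) -> str:
    """Append a worked example to the prompt, separated by a blank line."""
    return base_prompt.rstrip() + "\n\n" + reminder


prompt = with_example("You help editors find and update their content.", GROQ_REMINDER)
```

The reminder is longer than the rule, but it carries the filter, ordering, slice, and projection syntax in a form the agent can copy.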

Read it out loud. Print your system prompt. Read it as a document. Does it flow? Does it make sense? Is it something you’d be proud to hand to a colleague? If not, keep editing.

An old craft for new readers

None of this is new. Willison made the case back in 2023 that effective prompting requires communication skills, linguistics, philosophy, psychology. He was right. But we’d go further: the specific skill is constructing a reader. Figuring out their mental model, what context they’re missing, what language will land.

Writers have always done this. Documentation writers practice beginner’s mind. Editors cut ruthlessly. Teachers know that showing works better than telling.

There’s a whole tradition of thinking about how texts work, how readers interpret, how language shapes understanding. Rhetoric, hermeneutics, semiotics, composition theory. Thousands of years of people figuring out how to communicate clearly. Academics in rhetoric and technical communication have been building curricula around this intersection since 2023, largely unnoticed by the tech discourse.

It’s a bit ironic. Tech keeps reinventing this wheel under names like “context engineering” and “prompt optimization.” Meanwhile, the humanities departments have been teaching the underlying skills all along. Different vocabulary, same problems.

The secret to good agents isn’t better code. It’s better writing. And better writing is a craft that people have been practicing, and teaching, for a very long time.