Your agent needs better content. Here's how to give it.

Your agent misses products, returns stale prices, and can’t filter a catalog. The problem is how the agent accesses your content.

Even Westvang

Co-founder and product person at Sanity

Knut Melvær

Principal Developer Marketing Manager

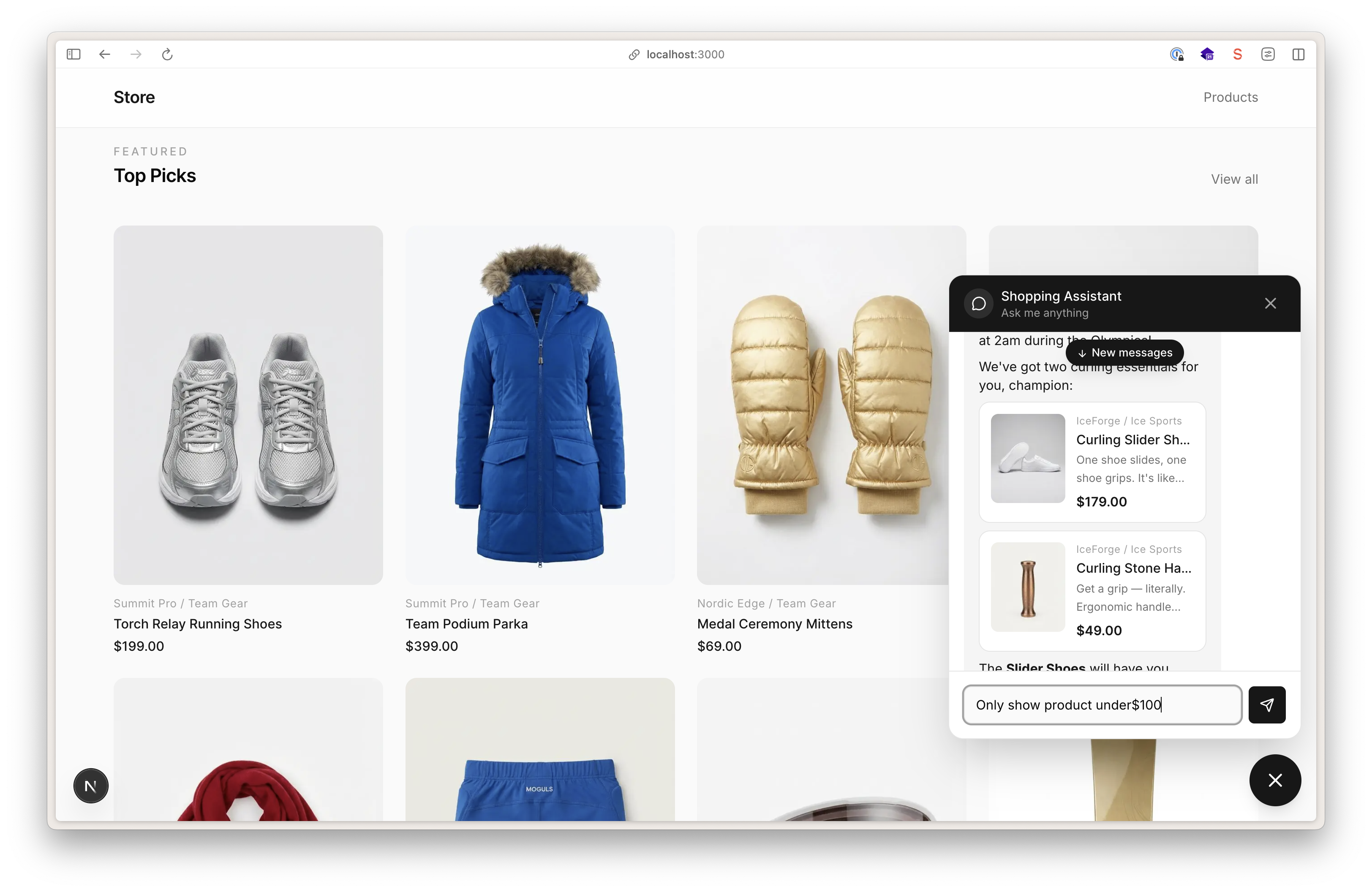

You've built an agent. It works in the demo. It answers product questions, surfaces relevant docs, helps customers find what they need. Confetti! You launch it to your site.

Then someone asks about the price of the blue running shoe in size 10, and it confidently returns the wrong number. Or it comes up with a product that doesn't exist. Or it suggests something that's been discontinued for three months. You add prompts like “DO NOT HALLUCINATE”. But it kinda doesn’t get better.

Everyone blames the model or gets bogged down in prompt engineering. But the model isn't the problem. There is only so much you can do with prompts.

The content backend is usually where it falls apart.

When “close enough” isn't close enough

RAG (retrieval-augmented generation) is the right idea: instead of relying on training knowledge, you retrieve real data and feed it to the model alongside the user's question. The question is what that retrieval looks like.

The first version most teams build: vectorize your content into text chunks, store the embeddings, and when the agent needs information, find the most semantically similar chunks and stuff them into the prompt. It's a reasonable starting point. For a lot of use cases, it gets you surprisingly far.

But the last year changed what agents can do. They went from "consume this context window" to "use these tools to go get what you need." That changes what good retrieval looks like.

This is where content as embeddings only takes you so far, and can turn out to be outright unhelpful for the folks you are trying to help.

Precision questions have fuzzy answers. A customer asks "what's the price of the blue trail runner in size 10?" Your embedding index returns the five most semantically similar chunks about trail running shoes. Maybe one of them contains the price. Maybe it contains last month's price. Maybe it contains the price for a different colorway. The model picks whichever chunk looks most relevant and answers with confidence. Sometimes it's right. Sometimes it's confidently wrong, which is worse than saying "I don't know."

Structure gets flattened. Your content has types, relationships, and attributes. Products have variants. Variants have prices, sizes, and inventory status. Brands relate to products. Products relate to reviews. When you vectorize all of that into text chunks, you flatten it. The embedding for a product page captures the vibe of that page, not the fact that variants[color == "blue" && size == "10"].price is $129. An agent that needs to filter, compare, or traverse relationships is out of luck.
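To make the contrast concrete, here is a minimal TypeScript sketch of the kind of exact lookup that structured content allows. The `Product` and `Variant` shapes, the field names, and the sample data are assumptions for illustration, not a real schema.

```typescript
// Hypothetical product shape; field names and sample data are illustrative only.
interface Variant {
  color: string;
  size: string;
  price: number;
  inStock: boolean;
}

interface Product {
  title: string;
  variants: Variant[];
}

const trailRunner: Product = {
  title: "Trail Runner",
  variants: [
    { color: "blue", size: "10", price: 129, inStock: true },
    { color: "blue", size: "11", price: 129, inStock: false },
    { color: "red", size: "10", price: 119, inStock: true },
  ],
};

// Exact attribute match: returns the price, or undefined when no variant
// matches, so the agent can say "I don't know" instead of guessing.
function variantPrice(product: Product, color: string, size: string): number | undefined {
  return product.variants.find((v) => v.color === color && v.size === size)?.price;
}

const bluePrice = variantPrice(trailRunner, "blue", "10"); // 129
const greenPrice = variantPrice(trailRunner, "green", "10"); // undefined
```

A text chunk can only hint at this answer. A structured lookup either returns it or returns nothing.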

Staleness is invisible. Someone on your team updates a price or discontinues a product. If your embedding pipeline runs on a schedule (and most do: hourly, daily, sometimes weekly), the agent keeps serving old data in the gap. Unlike a broken API that returns an error, a stale embedding returns a confident answer. There's no signal that anything is wrong. Money out the window.
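The gap is easy to reproduce in a toy model. The sketch below assumes a hypothetical `ScheduledIndex` that copies content on a schedule. It stands in for any batch embedding pipeline and is not a description of a specific product.

```typescript
// Toy model of a scheduled indexing pipeline. All names are hypothetical.
type ContentStore = Record<string, string>;

class ScheduledIndex {
  private snapshot: ContentStore = {};

  // Copy the current content into the index, as a scheduled job would.
  reindex(content: ContentStore): void {
    this.snapshot = { ...content };
  }

  // Retrieval serves the snapshot, with no hint that it might be outdated.
  retrieve(id: string): string | undefined {
    return this.snapshot[id];
  }
}

const content: ContentStore = { "shoe-1": "Blue trail runner, $129" };
const index = new ScheduledIndex();
index.reindex(content); // the nightly job runs

content["shoe-1"] = "Blue trail runner, $139"; // an editor changes the price

const servedBeforeNextRun = index.retrieve("shoe-1"); // still the $129 snapshot
index.reindex(content); // the next scheduled run
const servedAfterNextRun = index.retrieve("shoe-1"); // now the $139 text
```

Nothing in `retrieve` fails or warns between the two runs, and that silence is the whole problem.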

There's no write path. Pure RAG is read-only. The agent can retrieve text, but it can't act on your content model. It can't filter a product listing, apply a discount code, or update a shopping cart. For agents that need to do things, not just answer questions, text retrieval is only half the picture.

None of these are exotic edge cases. They're the normal failure modes of agents in production. The teams we've been working with all hit them, usually within the first few weeks of real user traffic.

What Agent Context does differently

Agent Context is still RAG. The retrieval just got a lot smarter and nimbler.

Today, we're shipping a "just add water" remote MCP endpoint that gives AI agents structured access to your Sanity Content Lake (that's our hosted content backend, if you're new here). MCP (Model Context Protocol) is an emerging standard for connecting AI agents to external tools and data sources. Think of it as a way for your agent to call functions on a remote server, similar to how a browser calls APIs.
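If you haven't looked at MCP on the wire: a tool invocation is a JSON-RPC 2.0 request with the method `tools/call`. The sketch below builds one in TypeScript. The tool name `groq_query` and its `query` argument are assumptions for illustration, not necessarily what the Agent Context endpoint exposes.

```typescript
// Shape of an MCP tool invocation: a JSON-RPC 2.0 request with method "tools/call".
interface ToolCallRequest {
  jsonrpc: "2.0";
  id: number;
  method: "tools/call";
  params: { name: string; arguments: Record<string, unknown> };
}

function buildToolCall(
  id: number,
  name: string,
  args: Record<string, unknown>
): ToolCallRequest {
  return { jsonrpc: "2.0", id, method: "tools/call", params: { name, arguments: args } };
}

// "groq_query" is a hypothetical tool name used only for this example.
const request = buildToolCall(1, "groq_query", {
  query: '*[_type == "product" && price < 150]{ title, price }',
});

// The serialized message an MCP client would send to the server.
const wireMessage = JSON.stringify(request);
```

In practice an MCP client library or your agent framework builds these messages for you, so you rarely write them by hand.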

The Agent Context MCP endpoint gives your agent two capabilities instead of just one: semantic search and structured content retrieval. Both are built on top of out-of-the-box features in Content Lake.

Semantic search via embeddings

Agents can use semantic search for discovery. "Find products similar to trail running shoes" works even if your content never uses that exact phrase. This is the part that RAG gets right, and we kept it.

A customer asks your agent: "Do you have lightweight trail running shoes under $150?" With text-chunk retrieval, you'd search for similar text and hope a relevant chunk surfaces. With Agent Context, the agent writes a GROQ query that combines structure with meaning:

*[_type == "product" && category == "shoes" && price < 150]
  | score(text::semanticSimilarity("lightweight trail runner"))
  | order(_score desc)
  { title, price, description }[0...5]

And back it gets structured content so it doesn’t need to suss out what’s going on from some free text search result:

[
  { "title": "Trailfly Ultra G 280", "price": 140, "description": "Minimalist fell runner..." },
  { "title": "Cloudventure Peak", "price": 129, "description": "Lightweight alpine trail shoe..." }
]

The category and price filters are exact. The semantic ranking finds the right products even if they're called "minimalist fell runners" in your content.
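As a mental model for what the query does (filter exactly, score by similarity, order, slice), here is an in-memory TypeScript sketch. It is not how Content Lake implements it. The three-dimensional "embeddings" are hand-picked toy vectors, and the last three catalog items are made up for the example.

```typescript
// Toy three-dimensional "embeddings", hand-picked for illustration. Real
// embeddings come from a model and have far more dimensions.
interface CatalogItem {
  title: string;
  category: string;
  price: number;
  embedding: number[];
}

const catalog: CatalogItem[] = [
  { title: "Trailfly Ultra G 280", category: "shoes", price: 140, embedding: [0.9, 0.8, 0.1] },
  { title: "Cloudventure Peak", category: "shoes", price: 129, embedding: [0.8, 0.6, 0.2] },
  { title: "Road Cruiser Max", category: "shoes", price: 120, embedding: [0.1, 0.2, 0.9] },
  { title: "Summit Carbon Pro", category: "shoes", price: 220, embedding: [0.9, 0.9, 0.1] },
  { title: "Trail Running Socks", category: "accessories", price: 15, embedding: [0.7, 0.7, 0.2] },
];

// Cosine similarity between two vectors of equal length.
function cosine(a: number[], b: number[]): number {
  let dot = 0;
  let normA = 0;
  let normB = 0;
  for (let i = 0; i < a.length; i++) {
    dot += a[i] * b[i];
    normA += a[i] * a[i];
    normB += b[i] * b[i];
  }
  return dot / (Math.sqrt(normA) * Math.sqrt(normB));
}

function searchShoes(
  items: CatalogItem[],
  queryEmbedding: number[],
  maxPrice: number,
  limit: number
): { title: string; price: number; score: number }[] {
  return items
    .filter((p) => p.category === "shoes" && p.price < maxPrice) // exact filters
    .map((p) => ({ title: p.title, price: p.price, score: cosine(p.embedding, queryEmbedding) }))
    .sort((x, y) => y.score - x.score) // best match first
    .slice(0, limit);
}

// Stand-in vector for the phrase "lightweight trail runner".
const results = searchShoes(catalog, [1.0, 0.8, 0.0], 150, 5);
// Titles in order: Trailfly Ultra G 280, Cloudventure Peak, Road Cruiser Max
```

The $220 shoe never shows up however similar it is, because the price filter runs before any scoring. Scoring only reorders what the filter lets through.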

Best thing? This just works out of the box. You do not need to set up anything. Content updates get automatically re-indexed into embeddings. And if you want more control, you’ll be able to configure what goes into embeddings too.

GROQ queries for structured access

When the agent needs a precise fact, not a fuzzy match, it can query the content directly. Take "What's the return policy for electronics?":

*[_type == "policy" && department == "electronics"][0]
  { title, returnWindow, conditions }

And back comes the exact information it needs to be useful to the customer:

{ "title": "Electronics return policy", "returnWindow": "30 days", "conditions": "Original packaging required" }

No embedding needed. The agent queries your content model directly, like any other API client.

This only works because Sanity stores content as structured data with types, fields, and references, not as a big pile of pages. That's what makes it possible to query by type, filter by attribute, and traverse relationships.
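If your own code issues a query like the return policy one, GROQ's `$`-prefixed parameters keep user-derived values out of the query string. A minimal TypeScript sketch, where the `policyQuery` helper and the `GroqRequest` type are hypothetical names:

```typescript
// A query string plus its parameters, kept separate. Names here are hypothetical.
interface GroqRequest {
  query: string;
  params: Record<string, string>;
}

// GROQ supports $-prefixed parameters, so the department value is passed
// alongside the query instead of being concatenated into it.
function policyQuery(department: string): GroqRequest {
  return {
    query:
      '*[_type == "policy" && department == $department][0]{ title, returnWindow, conditions }',
    params: { department },
  };
}

const policyRequest = policyQuery("electronics");
// With @sanity/client, this could be run as:
//   await client.fetch(policyRequest.query, policyRequest.params)
```

The same query shape then works for any department, and the value is never spliced into the query text.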

To see a full working example, check out the ecommerce agent use case guide.

Getting started with Agent Context

The embeddings live in the Content Lake alongside your content. When someone publishes a price change, the embeddings update. No reindexing pipeline. No sync lag. No "why is the agent still showing yesterday's price" Slack thread at 3pm.

If you're using a skills-compatible coding tool (Cursor, Claude Code, v0, or similar):

npx skills add sanity-io/agent-context --all

This gives your agent a skill that scaffolds an Agent Context connection to your Sanity project. Tell it "build a Sanity-powered agent for my site" and it will set up the integration, connected to your dataset, with schema-aware tools ready to use.

For full setup details, starters, and framework-specific guides, see the Getting Started guide. Agent Context uses embeddings in the Content Lake; for pricing details, see the pricing page.

You already know how to build this

We've been testing Agent Context with customers building production agents ahead of launch. When agents have structured content access instead of just text retrieval, accuracy goes up. These are production deployments handling real customer queries.

The patterns: customer support agents that actually know your product catalog. Product advisors that filter and recommend based on real attributes, not vibes. Internal tools where agents query your content model the same way your apps do.

If you've built apps on Sanity, you already know how to build agents on Sanity. You know how to model content, write GROQ queries, and set up auth. The pieces that make a great agent (structured data, typed schemas, real-time content) are the same pieces you've been working with. Agent Context is the connection layer.

And if you're doing authenticated experiences, you're closer than you think to agents that can take actions for logged-in users. Knowing how to handle auth and state in your apps is most of what you need.

The bigger picture

Agent Context is part of Sanity's broader AI capabilities. If you're already using Sanity, your content is already structured. You've already modeled your business in a way that agents can work with. Agent Context is the connection layer that makes that investment pay off in a new way.

Your websites, your apps, and now your agents. Same Content Lake.